A webinar hosted earlier today by the RSPCA looked into the potential to use artificial intelligence in the abattoir environment to improve animal welfare outcomes.

Presenter Dr Dana Campbell, from Australia’s national science agency CSIRO, outlined how recent research had explored the development of AI tools that could improve animal welfare outcomes during the lairage stage at processing plants.

The project work is combining the expertise of CSIRO animal scientists and data scientists to develop specialised AI technologies.

“We all know globally how much AI is being used, and the sophistication of the tech that’s out there, but teaching a computer to accurately monitor a group of animals in a pen does present special challenges,” Dr Campbell said during her presentation.

The AI research CSIRO has focussed on is specifically computer vision – using recorded visual data so that a computer can act as a human eye and brain when monitoring livestock.

The research is using machine learning to train computers to recognise specific traits and behaviours, as well as analysing and interpreting them in a meaningful way.

“If a human can see it, a computer can be trained to also see it,” Dr Campbell told the webinar. “In this case it is about training computers to automatically recognise cattle and their behaviour, from video recordings.”

She said there were specific stages within the slaughter process where animals needed to be monitored and managed effectively to optimise the welfare experience.

A key stage was lairage, when animals are held for a short period between arriving at the abattoir on transport trucks and being taken into the facility for slaughter.

“For cattle lairage in Australia, when the animals arrive off the transport they are housed in pens with water, but with no food. Current regulatory guidelines allow for holding without food for up to 24 hours before slaughter, but if animals need to be housed longer, then food must be provided,” she said.

Meat Standards Australia requirements are that animals are slaughtered within 48 hours of leaving their property of origin, with total transport time not to exceed 36 hours – but within this 36-hour period, a 12-hour rest period can occur.

These guidelines align with the regulated Australian Animal Welfare Standards and Guidelines for land transport of livestock. Beyond that, the Australian Meat Industry Council, representing livestock processors and meat retailers, has developed the Australian Livestock Processing Industry Animal Welfare Certification System.

This is a voluntary, independently audited certification program used to demonstrate compliance with best-practice animal welfare standards from when animals arrive at the processing plant through to slaughter.

“The majority of abattoirs in Australia are certified through this program, but it is a voluntary one,” Dr Campbell said.

She said the system CSIRO had been working on was not the only AI system being developed for deployment in abattoirs, with other computer vision systems such as Argus and Lumichain currently in use – but these had been used more in the processing fabrication side, rather than in the lairage pens.

Critical period

While the period of time each animal spent in lairage was short, it was a critical time in the animal’s life, she said.

“Not only is it essential to provide optimised care and to do right by these animals, but stress experienced during this period prior to slaughter can have significant impact on meat quality – potentially devaluing the animal if the conditions it experiences in the final hours are sub-optimal.”

However, providing optimised care during lairage was not always simple, Dr Campbell said.

There could be hundreds or thousands of animals coming through a processing facility across any given week, arriving day or night, and spending varying amounts of time in lairage pens.

Visually monitoring all these animals was labour intensive and, in many cases, not feasible for long enough to inform on the welfare status of individual animals, she said.

Computer vision tools offer multiple benefits

This is where computer vision could become a supportive tool in providing automated and continuous monitoring of animals in lairage pens.

“This process has the potential to not only monitor the animals to inform on any immediate interventions that might be needed, but it may also provide insight into longer-term management or husbandry changes that might improve the environment the animal is experiencing.”

The applications and benefits of computer vision monitoring were multi-fold, Dr Campbell said.

Firstly, such systems could be used to alert staff to an issue in a particular pen that needs immediate attention.

“The use of AI camera vision systems means there are eyes on the animals at all times, picking up on things that may otherwise be missed during the periodic checks a human observer might do.”

“It’s also a way to quantify whether there are changes that may be made longer-term at a particular facility. For example, by monitoring and collecting behavioural data on many animals across time, the facility may be able to determine whether a change in pen resources or a particular management strategy may in general result in more positive changes across all animals passing through.”

Finally, the collected data could be used for regulatory or certification scheme compliance.

“If the operator has numbers on what the animals are doing in the pens, this may provide more robust and objective data for anyone conducting an audit at that facility,” she said.

Mandatory CCTV surveillance in abattoirs

The use of computer vision monitoring algorithms aligned well with the changes being implemented in beef processing facilities across Australia this year, Dr Campbell said.

It was announced early in 2025 that, under the revised certification scheme, video surveillance systems would be mandatory in abattoirs from 2026 onwards.

“Being able to then add algorithms to interpret that video surveillance can provide supportive monitoring tools,” she said.

CSIRO process

Dr Campbell stepped through the process CSIRO has been engaged in, where animal behaviour and welfare scientists have worked with computer scientists, in partnership with a commercial Australian abattoir, to develop a prototype computer vision system to detect cattle behaviour.

“This will provide insight into the processes behind the development of this type of artificial intelligence, as well as the implications behind this type of system, moving forward,” she said.

The research was conducted in two lairage pens in a large commercial processing facility. Each pen was fitted with several cameras that together captured the entire pen area, with overlapping fields of view. Some were mounted over water troughs, and others could be zoomed in as needed.

The system was set up to record continuously over several months, generating terabytes of video data.

Different flooring substrates were used in each pen – concrete, which was standard in the facility at the time, and woodchip bedding. This allowed a husbandry intervention to be assessed, informing on how substrate type could affect cattle behaviour.

While the ultimate goal of computer vision was for automated processing, the process started with manual annotation, designed to train the computer vision processing algorithms, Dr Campbell said.

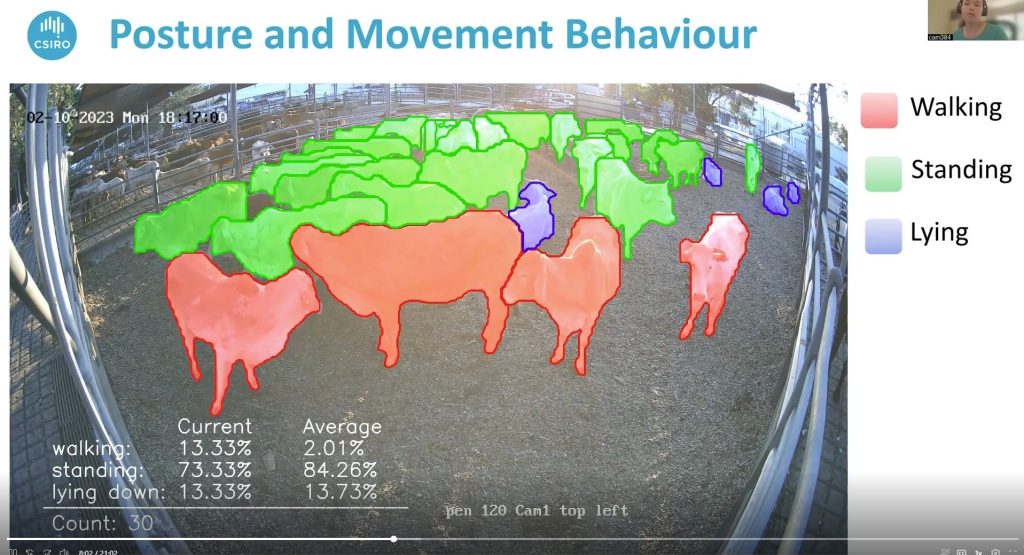

For this work, scientists manually labelled more than 800 still images taken from the video recordings, covering some 24,000 cattle, for posture and movement.

“This was used to begin training these algorithms to be able to automatically detect each beast in the video, as well as the behaviour it was exhibiting,” she said.

Three posture categories were applied – lying down, standing and walking. This measurement was important for quantifying resting behaviour, particularly for animals that may have travelled long distances.
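As a rough illustration of how posture labels might be turned into a resting measure, the Python sketch below computes the share of observations an animal spends lying, standing and walking. It assumes the vision system outputs one posture label per sampled frame for each tracked animal; that input format and the `posture_proportions` function are hypothetical, not part of the CSIRO system described.

```python
from collections import Counter

# The three posture categories described in the webinar.
POSTURES = ("lying", "standing", "walking")


def posture_proportions(frame_labels):
    """Return the fraction of observations spent in each posture.

    frame_labels: posture labels for one animal, one per sampled video
    frame (a hypothetical input format assumed for this sketch).
    """
    counts = Counter(frame_labels)
    unknown = set(counts) - set(POSTURES)
    if unknown:
        raise ValueError(f"unexpected posture labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        # No observations yet: report zero for every posture.
        return {p: 0.0 for p in POSTURES}
    return {p: counts[p] / total for p in POSTURES}


# Example: one animal observed in 10 sampled frames.
labels = ["lying"] * 6 + ["standing"] * 3 + ["walking"]
print(posture_proportions(labels))  # {'lying': 0.6, 'standing': 0.3, 'walking': 0.1}
```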

Another metric assessed the amount of activity in each pen, establishing what might be considered a ‘standard degree’ of activity so that pens showing unusually low or high levels of movement could be identified.

This could then be used to try to work out what might be causing this behaviour, and what measures may need to be implemented.
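One simple way to express a ‘standard degree’ of activity, offered here only as a sketch under stated assumptions, is the mean and standard deviation of historical activity scores, flagging a pen whose current score sits more than a chosen number of standard deviations away. The activity score units, the `flag_unusual_activity` function and the default limit of 2.0 are illustrative choices, not figures from the research.

```python
from statistics import mean, stdev


def flag_unusual_activity(baseline, current, z_limit=2.0):
    """Compare a pen's current activity score with a historical baseline.

    baseline: past activity scores for comparable pens or periods
    (hypothetical units, e.g. fraction of animals moving per frame).
    z_limit: hypothetical number of standard deviations treated as unusual.
    Returns "low", "high" or "normal".
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline values")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation counts as unusual.
        if current == mu:
            return "normal"
        return "high" if current > mu else "low"
    z = (current - mu) / sigma
    if z < -z_limit:
        return "low"
    if z > z_limit:
        return "high"
    return "normal"


baseline = [0.20, 0.22, 0.18, 0.21, 0.19]
print(flag_unusual_activity(baseline, 0.05))  # low
```

A z-score is only one possible choice; a baseline built per time of day or per flooring type would be a natural refinement.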

Drinking behaviour was another key metric analysed in the study. Detecting both animals at the trough and those actually drinking water was an essential part of the algorithm development.

This measurement can be used to confirm that animals are drinking water, and it could provide alerts to personnel if there is any unusual drinking activity detected.
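A minimal sketch of a drinking alert, assuming the system logs a timestamp each time it detects an animal drinking in a pen: raise an alert when no drinking has been seen for longer than a set gap since the last detection, or since the pen was filled if nothing has been detected yet. The function name and the two-hour default are hypothetical placeholders.

```python
from datetime import datetime, timedelta


def drinking_alert(drinking_events, pen_filled_at, now, max_gap=timedelta(hours=2)):
    """Return True if no drinking has been detected for longer than max_gap.

    drinking_events: datetimes at which drinking was detected (hypothetical log).
    pen_filled_at: when the group entered the pen; used if no drinking is logged.
    max_gap: hypothetical maximum tolerated gap between detected drinking events.
    """
    past = [t for t in drinking_events if t <= now]
    last_seen = max(past) if past else pen_filled_at
    return now - last_seen > max_gap


# Example with a hypothetical log for one pen.
filled = datetime(2025, 3, 1, 6, 0)
events = [datetime(2025, 3, 1, 6, 30), datetime(2025, 3, 1, 7, 10)]
print(drinking_alert(events, filled, now=datetime(2025, 3, 1, 8, 0)))   # False: last drink 50 minutes ago
print(drinking_alert(events, filled, now=datetime(2025, 3, 1, 10, 0)))  # True: no drink for 2 h 50 min
```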

This type of assessment could also show whether long-term changes were needed, such as to water trough design or location, or to water temperature.

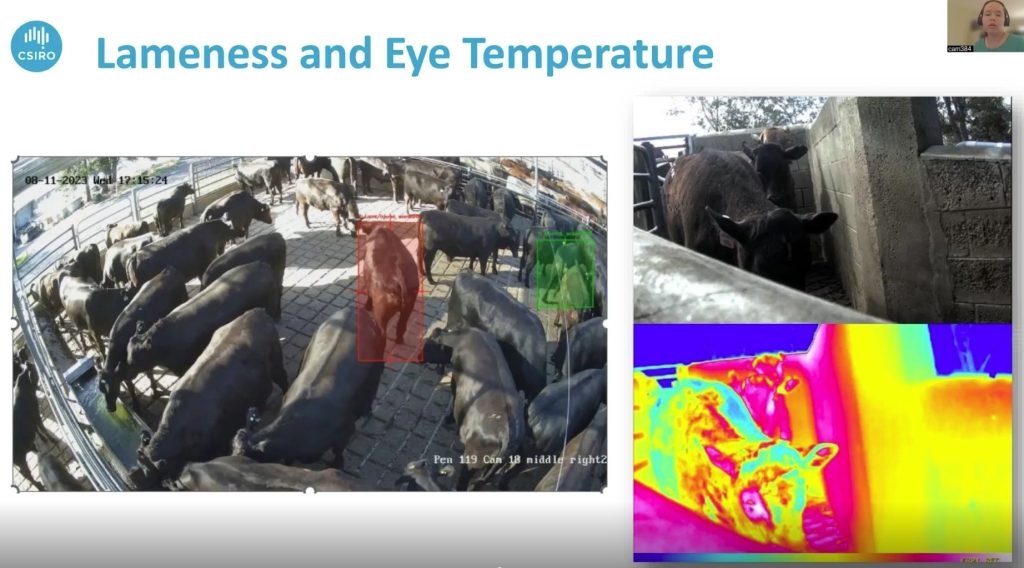

Automated detection of other indicators that may inform on the welfare status of animals was also progressing, the webinar was told. These included detection of lameness in the lairage pens, and the use of thermal cameras to measure eye temperature as animals are unloaded from the delivery truck.

Looking at eye temperature at this stage can inform on whether an animal has an elevated body temperature, which might be caused by heat stress or illness – both of which would require immediate attention by staff.

Complexities in research task

Dr Campbell highlighted some of the complexities in the creation of computer vision algorithms to monitor animals in this way.

“In order to get clear footage of all animals in a pen, multiple cameras are required, but then the system can be faced with the challenge of interpreting what is being seen from multiple camera angles. This required complex geometry calculations.”
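The webinar did not detail the geometry involved. A common technique for relating several cameras that view a roughly flat floor, shown here as an assumed approach rather than CSIRO’s method, is to map each detection’s floor contact point into shared pen coordinates with a 3×3 homography, then treat detections from different cameras that land close together as the same animal. The matrices, the 0.5 m tolerance and the function names are hypothetical.

```python
import math


def apply_homography(H, x, y):
    """Map an image pixel (x, y) to pen-floor coordinates using a 3x3 homography H."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if w == 0:
        raise ValueError("point maps to infinity")
    return u / w, v / w


def same_animal(H_a, pt_a, H_b, pt_b, tol_m=0.5):
    """Guess whether detections from two cameras are the same animal.

    Both points are projected onto the pen floor and compared; tol_m is a
    hypothetical distance tolerance in metres.
    """
    xa, ya = apply_homography(H_a, *pt_a)
    xb, yb = apply_homography(H_b, *pt_b)
    return math.hypot(xa - xb, ya - yb) <= tol_m


# Hypothetical calibrations: 1 cm per pixel, camera B offset 4 m along the pen.
H_a = [[0.01, 0, 0], [0, 0.01, 0], [0, 0, 1]]
H_b = [[0.01, 0, 4.0], [0, 0.01, 0], [0, 0, 1]]
print(same_animal(H_a, (500, 300), H_b, (100, 300)))  # True: both map to about (5 m, 3 m)
```

In practice each camera’s homography would come from a calibration step, and the flat-floor assumption breaks down for points that are not on the ground.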

The system also needed to be trained across different conditions, including weather, species, size, illumination, flooring, angle and behaviour. Part of this involved creating a digital twin lairage environment, allowing simulation of variations across parameters.

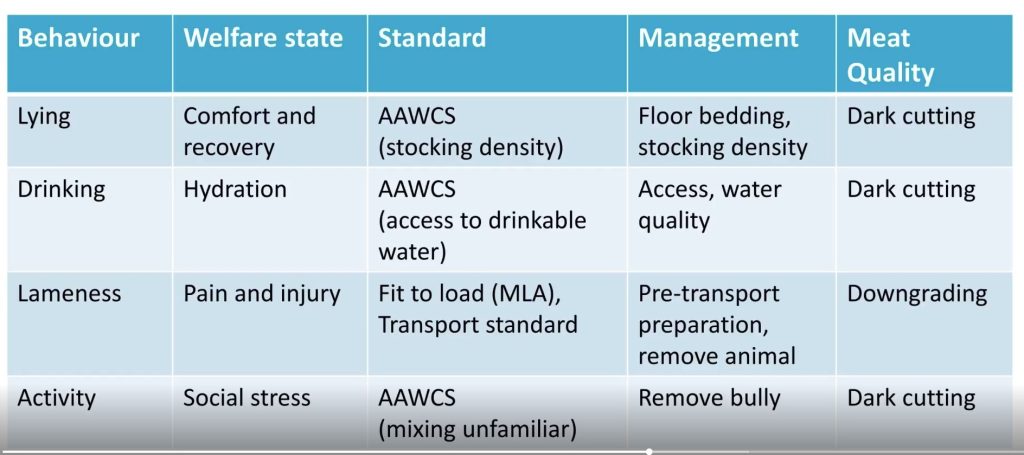

Dr Campbell presented the table below listing behaviour indicators, and some of the management interventions that might be applied.

So where to next for this type of AI monitoring technology?

“This is where the interpretation of behaviours being seen becomes really critical,” Dr Campbell said.

“Are cattle arriving at a particular time of day more prone to lying down, or is that due to transport duration, group age or breed, for example?”

Taking all these variations into account when deciding whether a behaviour warrants attention would require thresholds to be programmed into an automated system, so that it could alert staff when they need to intervene.

“Ensuring that these thresholds are meaningful for the context in which they are being used is a critical next step in the development of any computer vision system that might be deployed more widely across the processing industry.”
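To make the idea of context-dependent thresholds concrete, the sketch below adjusts a lying-time alert threshold for transport duration and arrival time. Every number in it is an invented placeholder, and the `PenContext` fields and adjustment rules are hypothetical; choosing meaningful values is the open question Dr Campbell identified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenContext:
    arrival_hour: int       # hour of day the group arrived (0-23)
    transport_hours: float  # duration of the preceding journey


def lying_alert_threshold(ctx: PenContext) -> float:
    """Hypothetical rule: maximum expected fraction of time lying before alerting.

    All values below are placeholders, not validated figures.
    """
    threshold = 0.5
    if ctx.transport_hours > 12:
        threshold += 0.2  # placeholder: more lying expected after long journeys
    if ctx.arrival_hour >= 20 or ctx.arrival_hour < 5:
        threshold += 0.1  # placeholder: more resting expected after overnight arrival
    return min(threshold, 0.95)


def should_alert(lying_fraction: float, ctx: PenContext) -> bool:
    """Alert only when lying exceeds the context-adjusted threshold."""
    return lying_fraction > lying_alert_threshold(ctx)


# The same observed behaviour can be routine in one context and flagged in another.
print(should_alert(0.6, PenContext(arrival_hour=10, transport_hours=4.0)))   # True
print(should_alert(0.6, PenContext(arrival_hour=22, transport_hours=20.0)))  # False
```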